Why test ad copy: boost ROI with smarter campaigns


Marketing


Regular ad copy testing is essential for maximizing ROI and reducing wasted budget.

Structured, ongoing A/B testing helps identify effective messaging elements, boosting click-through and conversion rates.

Long-term success relies on continuous learning, proper analysis, and scaling winning creatives systematically.

Most businesses running paid ads on Google or Meta are leaving real money on the table. Not because their targeting is wrong or their budget is too small, but because they never systematically test their ad copy. Untested messaging is a silent budget drain. You keep spending, the campaigns keep running, and you never know whether a different headline or call to action could have doubled your conversions. This article breaks down what ad copy testing actually means, why it directly impacts your ROI, and how to build a repeatable testing process that compounds results over time.


Key Takeaways

Point | Details |

|---|---|

Purpose of testing | Ad copy testing reveals what truly drives clicks and sales so you can maximize campaign ROI. |

Key metrics matter | Measure ROAS and CPA in addition to CTR for a complete view of campaign success. |

Test design essentials | Always run tests for at least one week, test one variable at a time, and avoid peeking at early results. |

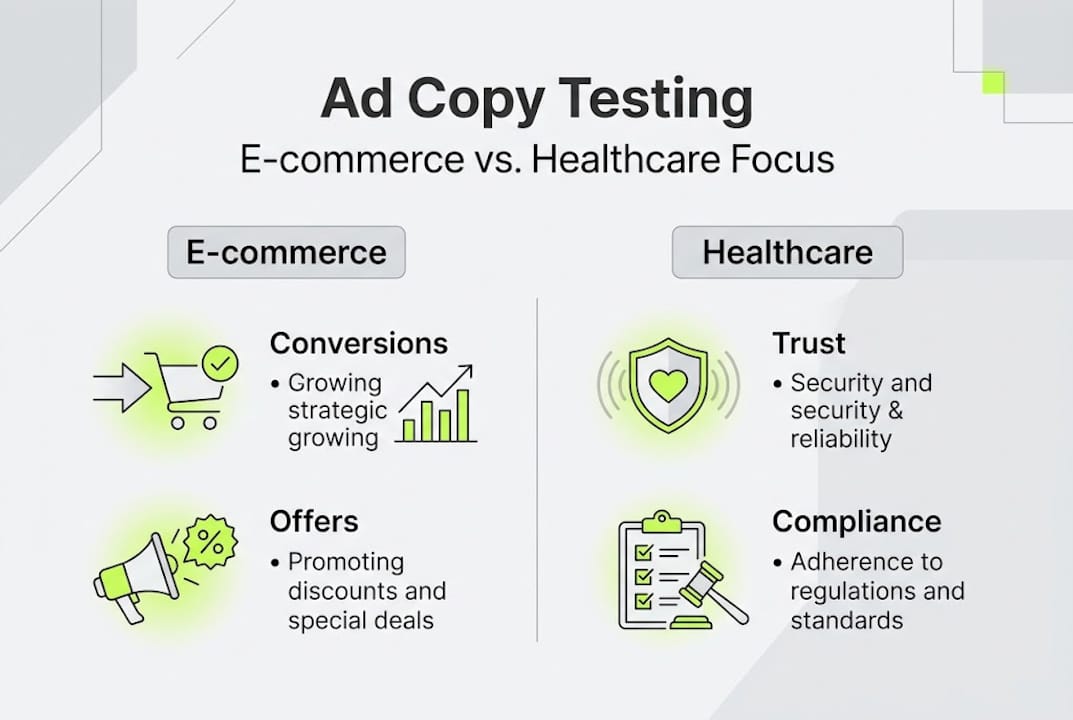

Industry considerations | Healthcare ads require extra trust and compliance testing, while e-commerce can focus more on offer clarity and urgency. |

Scale and repeat | Document lessons, build on successes, and make ad copy testing a regular habit for sustained ROI growth. |

What does it mean to test ad copy?

Ad copy testing is the practice of comparing different versions of your ad messaging to find out which one performs best with your target audience. It sounds straightforward, but it is one of the most misunderstood activities in performance marketing.

The biggest misconception? That testing is a one-time event. Many teams run a quick split test, pick a winner, and move on. That is not testing. That is guessing with extra steps. Real A/B testing in ads is a structured, ongoing process of forming hypotheses, isolating variables, and collecting enough data to make confident decisions.

Here is a quick breakdown of the core concepts:

Variant: A version of your ad that differs from the original in one specific way

Control: The original ad you are comparing against

Variable: The single element you are changing, such as the headline, CTA, or description

A/B test: A direct comparison between two variants

Multivariate test: Testing multiple variables simultaneously across several ad versions

Understanding what ad copywriting is, and what each part of an ad is meant to do, is the foundation for any test. If you do not know what each element of your copy is doing, you cannot isolate what to change.

"Testing is not about proving your instincts right. It is about letting your audience tell you what works."

On timing: run each test for a minimum of 7 to 14 days and collect 1,000 to 5,000 impressions per variant before drawing any conclusions. Running a test for two days and calling a winner is one of the fastest ways to make the wrong call.
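The 1,000 to 5,000 impression range is a rule of thumb. How many impressions a test really needs depends on your baseline CTR and on how small a lift you want to detect. The sketch below uses the standard sample-size approximation for a two-sided, two-proportion z-test; the 5% significance level and 80% power defaults are common statistical conventions rather than ad platform settings, and the example inputs are hypothetical.

```python
import math
from statistics import NormalDist


def impressions_per_variant(baseline_ctr: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate impressions each variant needs so a two-sided
    two-proportion z-test can detect the given relative CTR lift."""
    p1 = baseline_ctr                         # control CTR
    p2 = baseline_ctr * (1 + relative_lift)   # CTR the variant would need to reach
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 5% baseline CTR, hoping to detect a 20% relative lift (5% to 6%).
print(impressions_per_variant(0.05, 0.20))
```

With these inputs the approximation asks for roughly 8,000 impressions per variant, above the rule-of-thumb range. Larger expected lifts or higher baseline CTRs need far fewer, which is why it helps to run the numbers for your own account before committing to a test length.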

Why testing ad copy is critical for ROI

Now that the foundation is clear, let's talk about money. Specifically, what systematic ad copy testing does for your bottom line.

When you test copy consistently, you learn exactly what drives clicks, conversions, and purchases. Without it, you are optimizing blindly. You might improve your bidding strategy or tighten your audience targeting, but if your message does not resonate, none of that matters.

Here is a direct comparison of what campaigns look like with and without regular testing:

| Metric | Without testing | With regular testing |
|---|---|---|
| Click-through rate | Flat or declining | Steadily improving |
| Cost per acquisition | Unpredictable | Controlled and decreasing |
| ROAS | Inconsistent | Compounding upward |
| Budget waste | High | Minimized |
| Optimization speed | Slow | Accelerated |

The four key results that ad copy directly influences:

CTR (click-through rate): Better copy gets more clicks for the same spend

CPA (cost per acquisition): Relevant messaging attracts buyers, not browsers

ROAS (return on ad spend): Higher quality traffic converts at better rates

Revenue: The ultimate output of all of the above working together
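CTR, CPA, and ROAS are simple ratios, but computing them side by side for each variant makes it obvious when they disagree. A minimal sketch follows; the field names and every number in it are hypothetical, chosen so that the variant with the better CTR is not the one with the better CPA and ROAS.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    impressions: int
    clicks: int
    conversions: int
    spend: float    # ad spend, in account currency
    revenue: float  # revenue attributed to this variant

    @property
    def ctr(self) -> float:
        # Click-through rate: share of impressions that became clicks
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cpa(self) -> float:
        # Cost per acquisition: spend divided by conversions (infinite if none)
        return self.spend / self.conversions if self.conversions else float("inf")

    @property
    def roas(self) -> float:
        # Return on ad spend: attributed revenue per unit of spend
        return self.revenue / self.spend if self.spend else 0.0


# Hypothetical numbers: variant A wins on CTR, variant B wins on CPA and ROAS.
a = VariantResult(impressions=10_000, clicks=400, conversions=8, spend=600.0, revenue=1_200.0)
b = VariantResult(impressions=10_000, clicks=300, conversions=12, spend=600.0, revenue=1_800.0)
for name, v in (("A", a), ("B", b)):
    print(f"{name}: CTR={v.ctr:.1%}  CPA={v.cpa:.2f}  ROAS={v.roas:.1f}")
```

Judged on CTR alone, A looks like the winner; judged on what the clicks earned, B is clearly better.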

Skipping tests also creates a compounding risk. If you run the same copy for months, cutting ad waste becomes impossible because you have no baseline to compare against. And if your results start slipping, you have no data to explain why.

One critical warning: peeking at results early inflates false positives by up to 30%. That means if you check your test results before reaching your target sample size, you are likely to make a decision based on noise, not signal.
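One way to see the peeking problem for yourself is an A/A simulation: both "variants" share the same true conversion rate, so any declared winner is a false positive. The sketch below compares checking a pooled two-proportion z-test every day against checking once at the planned end. The traffic volume, conversion rate, and 14-day window are hypothetical, and the result shows only the direction of the effect; how large the inflation gets depends on how often you look, so it is not meant to reproduce the 30% figure.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_a / n_a - conv_b / n_b) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(runs: int = 400, days: int = 14, daily_clicks: int = 200,
                      true_rate: float = 0.05, alpha: float = 0.05, seed: int = 7):
    """Both arms share one true conversion rate, so every 'winner' is a false positive."""
    rng = random.Random(seed)
    peek_hits = final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        flagged_while_peeking = False
        for _ in range(days):
            n += daily_clicks
            conv_a += sum(rng.random() < true_rate for _ in range(daily_clicks))
            conv_b += sum(rng.random() < true_rate for _ in range(daily_clicks))
            # A daily "peek": a peeker would stop and declare a winner the first time p < alpha
            if p_value(conv_a, n, conv_b, n) < alpha:
                flagged_while_peeking = True
        peek_hits += flagged_while_peeking
        # The disciplined approach: a single check at the planned end of the test
        final_hits += p_value(conv_a, n, conv_b, n) < alpha
    return peek_hits / runs, final_hits / runs


peeking_rate, planned_rate = simulate_aa_tests()
print(f"false positives with daily peeking: {peeking_rate:.1%}")
print(f"false positives with one planned check: {planned_rate:.1%}")
```

The single planned check should land near the nominal 5% false positive rate, while daily peeking flags noticeably more of these identical variants as "winners".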

Pro Tip: Before launching any test, write down your success metric. Is it CPA? ROAS? Conversion rate? Define it in advance. This removes the temptation to shift the goalposts once results start coming in. Optimizing ad campaign ROI starts with knowing what you are actually optimizing for.

Best practices for effective ad copy testing

Knowing why testing matters is one thing. Running tests that actually produce reliable, actionable data is another. Here is the framework we use and recommend.

Step-by-step testing process:

Form a hypothesis: Start with a specific, testable assumption. Example: "Adding a price anchor in the headline will increase CTR by 15%."

Select one variable: Headlines, CTAs, descriptions, and social proof are all valid options. Pick one per test.

Set up your variants: Create your control and at least one variant that changes only that variable.

Define your run time: Commit to a minimum of 7 to 14 days and a minimum impression threshold before reviewing results.

Analyze with context: Look at ROAS and CPA, not just CTR. A high CTR with poor conversions means your copy attracted the wrong audience.
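The five steps above can be captured in a small test plan object that refuses a variant changing more than one element and will not report the test as ready for review until both the time and impression minimums are met. This is a sketch under stated assumptions: the three copy fields, the class and method names, and the example shoe ad with its $89 price anchor are all hypothetical, and the 7-day and 1,000-impression defaults come from the guidance above rather than from any ad platform.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AdCopy:
    headline: str
    description: str
    cta: str


def changed_elements(control: AdCopy, variant: AdCopy) -> list[str]:
    """Names of the copy elements that differ between control and variant."""
    return [f.name for f in fields(AdCopy)
            if getattr(control, f.name) != getattr(variant, f.name)]


@dataclass
class CopyTestPlan:
    hypothesis: str
    primary_metric: str              # chosen before launch, e.g. "CTR", "CPA", "ROAS"
    control: AdCopy
    variant: AdCopy
    min_days: int = 7
    min_impressions_per_variant: int = 1_000

    def __post_init__(self) -> None:
        # Enforce "one variable at a time"
        changed = changed_elements(self.control, self.variant)
        if len(changed) != 1:
            raise ValueError(f"variant must change exactly one element; changed: {changed}")

    def ready_to_review(self, days_elapsed: int, impressions: dict[str, int]) -> bool:
        """True only once both the time and the impression thresholds are met."""
        return days_elapsed >= self.min_days and all(
            impressions.get(arm, 0) >= self.min_impressions_per_variant
            for arm in ("control", "variant")
        )


# Hypothetical plan: the variant adds a price anchor to the headline only.
plan = CopyTestPlan(
    hypothesis="Adding a price anchor in the headline will increase CTR by 15%.",
    primary_metric="CTR",
    control=AdCopy("Trail Running Shoes", "Lightweight grip for any terrain.", "Shop now"),
    variant=AdCopy("Trail Running Shoes from $89", "Lightweight grip for any terrain.", "Shop now"),
)
print(plan.ready_to_review(days_elapsed=4, impressions={"control": 2_300, "variant": 2_250}))  # False: only 4 of 7 days
```

Writing the primary metric into the plan before launch is the code equivalent of the Pro Tip above: the goalpost is fixed before any results arrive.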

Test one variable at a time, and pre-qualify your hypothesis with analytics before running the test. If your data already shows that mobile users convert at half the rate of desktop users, factor that into your test design from the start.

Pro Tip: Headlines are typically the highest-impact variable to test first. They are the first thing your audience sees, and even a small improvement in headline performance can lift your entire campaign's efficiency. Learn more about the A/B testing process to set up your first test correctly.

Avoid two common pitfalls. First, do not run underpowered tests. A test with 200 impressions per variant tells you almost nothing. Second, do not confuse correlation with causation. If your test runs during a holiday weekend, external factors may be skewing the results. Always note what else was happening during your test window. Properly tracking performance metrics is what separates guesswork from real optimization.

Ad copy testing for ecommerce vs. healthcare

The core testing principles apply across industries, but the priorities and constraints differ significantly between e-commerce and healthcare. Knowing these differences helps you design tests that are both effective and compliant.

| Element | E-commerce | Healthcare |
|---|---|---|
| Primary testing goal | Maximize conversions and ROAS | Build trust and drive qualified leads |
| Key variables to test | Price anchors, urgency, offers | Trust signals, credentials, tone |
| Compliance risk | Low to moderate | High |
| Audience intent | Purchase-ready | Research and consideration phase |
| Tone | Persuasive and direct | Empathetic and informative |

For e-commerce, you have more creative freedom. Test aggressive CTAs, limited-time offers, social proof numbers, and product-specific claims. The feedback loop is fast because purchases happen quickly.

Healthcare is a different environment. Healthcare organizations require trust signals and compliance considerations in every ad, which means your testing variables must be chosen carefully. You cannot test claims that could be seen as misleading or unsubstantiated.

Variables worth testing in health-focused ad copy:

Credential mentions ("Board-certified," "Licensed provider")

Empathy-driven vs. outcome-driven headlines

Specific vs. general service descriptions

Patient-focused language vs. clinical terminology

Call-to-action phrasing ("Book a consultation" vs. "Get started today")

When improving ad copy effectiveness in healthcare, the goal is not just performance. It is building enough trust in the ad itself that the right patient takes the next step. Compliance and performance are not opposites. They are both requirements.

Interpreting results and scaling winners

Running a good test is only half the work. Reading the results correctly and acting on them is where most teams fall short.

First, check for statistical significance before declaring a winner. A result is statistically significant when you can be confident it reflects a real difference in performance, not random variation. Most ad platforms will flag this, but do not rely on the platform alone. Cross-reference with your actual business metrics.
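As a rough cross-check on what the platform reports, the sketch below runs a pooled two-proportion z-test on click-to-conversion rate and only names a winner when that result and CPA point the same way. It is one reasonable decision rule, not the method any particular platform uses; the dictionary keys, the 0.05 threshold, and the example figures are hypothetical.

```python
import math
from statistics import NormalDist


def conversion_p_value(conv_a: int, clicks_a: int, conv_b: int, clicks_b: int) -> float:
    """Two-sided p-value for a difference in conversion rate (pooled z-test)."""
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    if se == 0:
        return 1.0
    z = (conv_b / clicks_b - conv_a / clicks_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def cpa(arm: dict) -> float:
    # Cost per acquisition; infinite when an arm has no conversions yet
    return arm["spend"] / arm["conversions"] if arm["conversions"] else float("inf")


def declare_winner(control: dict, variant: dict, alpha: float = 0.05) -> str:
    """Name a winner only when the statistical signal and the business signal agree."""
    p = conversion_p_value(control["conversions"], control["clicks"],
                           variant["conversions"], variant["clicks"])
    if p >= alpha:
        return "no significant difference"
    variant_cvr_higher = (variant["conversions"] / variant["clicks"]
                          > control["conversions"] / control["clicks"])
    variant_cpa_lower = cpa(variant) < cpa(control)
    if variant_cvr_higher and variant_cpa_lower:
        return "variant"
    if not variant_cvr_higher and not variant_cpa_lower:
        return "control"
    return "mixed signals"  # significant, but conversion rate and CPA disagree


# Hypothetical results after the planned sample size was reached
control = {"clicks": 2_000, "conversions": 60, "spend": 1_500.0}
variant = {"clicks": 2_000, "conversions": 90, "spend": 1_500.0}
print(declare_winner(control, variant))
```

A "mixed signals" result, for example a higher conversion rate bought with much more expensive clicks, is exactly the case where the platform's significance flag alone would mislead.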

Peeking inflates false positive rates, so commit to your predetermined sample size before making any decisions. This is non-negotiable.

When a variant wins, here is what to do next:

Implement the winner as your new control across the campaign

Document what worked and why you think it resonated

Segment the results by device, audience, or placement to find hidden patterns

Start the next test immediately, using the winner as your new baseline

Archive the losing variant with notes for future reference
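Segmenting results, the third step in the list above, mostly comes down to aggregating clicks and conversions per segment and arm. A minimal sketch follows; the row shape is a hypothetical export format rather than any platform's report schema, and the numbers are invented to show a variant that helps on mobile but not on desktop. Each segment holds fewer clicks than the whole test, so a split like this is best treated as a hypothesis for the next test rather than a new winner.

```python
from __future__ import annotations

from collections import defaultdict


def conversion_rate_by_segment(rows: list[dict], segment_key: str = "device") -> dict:
    """Aggregate clicks and conversions per (segment, arm) and return conversion rates."""
    totals = defaultdict(lambda: [0, 0])  # (segment, arm) -> [clicks, conversions]
    for row in rows:
        bucket = totals[(row[segment_key], row["arm"])]
        bucket[0] += row["clicks"]
        bucket[1] += row["conversions"]
    return {key: conv / clicks for key, (clicks, conv) in totals.items() if clicks}


# Hypothetical export rows: the variant lifts mobile but not desktop.
rows = [
    {"device": "mobile",  "arm": "control", "clicks": 1_200, "conversions": 24},
    {"device": "mobile",  "arm": "variant", "clicks": 1_200, "conversions": 36},
    {"device": "desktop", "arm": "control", "clicks": 800,   "conversions": 32},
    {"device": "desktop", "arm": "variant", "clicks": 800,   "conversions": 30},
]
for (segment, arm), rate in sorted(conversion_rate_by_segment(rows).items()):
    print(f"{segment:<8} {arm:<8} {rate:.2%}")
```

Swapping `segment_key` for an audience or placement field lets the same function cover the other cuts mentioned above.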

Pro Tip: Build a "winning copy bank." Every time a variant wins, add it to a shared document with the test context, the metric it improved, and the margin of improvement. Over time, this becomes one of your most valuable creative assets. It also speeds up future campaign launches because you are starting from proven copy, not a blank page.
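If a shared document gets unwieldy, the same winning copy bank can live in a plain JSON Lines file, one winner per line. The sketch below records the fields the tip names (test context, metric improved, margin of improvement) and handles metrics where lower is better, such as CPA. The field names, file format, and example entry are assumptions for illustration only.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class WinningCopy:
    copy_text: str
    element: str                   # e.g. "headline", "cta", "description"
    test_context: str              # platform, audience, dates, anything unusual in the window
    metric: str                    # the primary metric fixed before the test
    control_value: float
    winner_value: float
    lower_is_better: bool = False  # True for CPA; False for CTR or ROAS

    @property
    def relative_improvement(self) -> float:
        # Positive means the winner beat the control on this metric
        change = (self.winner_value - self.control_value) / self.control_value
        return -change if self.lower_is_better else change


def add_to_bank(entry: WinningCopy, path: Path) -> None:
    """Append one winner as a JSON line so the bank stays easy to diff and search."""
    record = asdict(entry)
    record["relative_improvement"] = round(entry.relative_improvement, 4)
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def load_bank(path: Path) -> list[dict]:
    """Read every stored winner; an empty list if the bank does not exist yet."""
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Hypothetical entry: a headline test where CPA fell from 25 to 20.
entry = WinningCopy(
    copy_text="Trail Running Shoes from $89",
    element="headline",
    test_context="Hypothetical: search campaign, 14 days, no promotions running",
    metric="CPA",
    control_value=25.0,
    winner_value=20.0,
    lower_is_better=True,
)
print(f"{entry.metric} improved by {entry.relative_improvement:.0%}")
```

Calling `add_to_bank(entry, Path("winning_copy_bank.jsonl"))` after each test, and `load_bank` when briefing a new campaign, is enough to start from proven copy instead of a blank page.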

Scaling winners means pushing more budget behind what is already working while simultaneously running the next test. This is how ad spend optimization actually compounds. You are not just finding one good ad. You are building a system. Track ad metrics consistently to keep your scaling decisions grounded in data.

A fresh look: Why most businesses stop short with ad copy testing

Here is what we see repeatedly: a business runs one or two tests, finds a winner, and then stops. They treat ad copy testing like a project with a finish line. It is not. It is a process with no ceiling.

The compounding effect of regular testing is real. A 5% improvement in CTR this month, a 7% drop in CPA next month, and a 10% lift in ROAS the month after that. These are not dramatic single wins. They are incremental gains that stack into major performance shifts over a quarter or a year.
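When successive tests improve the same metric and each new test starts from the previous winner, their lifts multiply rather than add. The short sketch below makes that arithmetic explicit. It assumes each lift holds once the winner is rolled out, which real campaigns do not guarantee, and the three lifts are hypothetical figures applied to a single metric (say ROAS), unlike the mixed metrics in the paragraph above.

```python
from __future__ import annotations

import math


def cumulative_lift(successive_lifts: list[float]) -> float:
    """Total relative improvement when each test's lift applies on top of the last winner."""
    return math.prod(1 + lift for lift in successive_lifts) - 1


# Hypothetical: three consecutive winning tests on the same metric.
lifts = [0.05, 0.07, 0.10]
print(f"compounded: {cumulative_lift(lifts):.1%}, simple sum: {sum(lifts):.1%}")
```

Here the compounded gain is about 23.6% against 22% from simply adding the three lifts; the gap widens as the number of winning tests grows.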

Every campaign you run without a test is a missed opportunity to learn something. And in paid advertising, knowledge is budget efficiency. The teams that win long-term are the ones who treat every ad as a data point, not just a creative output.

If you want deeper ad copy insights, the shift in mindset is simple: stop thinking about testing as extra work. Start thinking about it as the work. Document every test. Put winners back into circulation. Build on what you know. That is how you close the gap between average campaigns and exceptional ones.

Supercharge your campaigns with expert ad copy testing

If you are ready to stop guessing and start building a testing system that actually moves the needle, we can help. At A&T Digital Agency, we design and manage data-driven ad copy testing frameworks across Google and Meta, built specifically for e-commerce and healthcare businesses that need results, not just activity.

Our Google Ads management and Meta Ads management services include structured copy testing as a core part of every campaign. We do not run ads and hope for the best. We test, learn, and scale what works. If you want a team that treats your ad budget like it matters, let's talk.

Frequently asked questions

How long should you run an ad copy test for reliable results?

Run your ad copy tests for a minimum of 7 to 14 days, with 1,000 to 5,000 impressions per variant, to ensure statistically valid data. Shorter tests risk decisions based on random fluctuation rather than real performance differences.

What metrics should you use to evaluate ad copy tests?

Prioritize ROAS and CPA over click-through rate alone. CTR tells you if people clicked, but ROAS and CPA tell you if those clicks actually generated value for your business.

How do you choose what to test in your ad copy?

Start with headlines first, since they have the highest impact on initial engagement. Test only one variable per round to isolate what is actually driving the change in performance.

Why avoid peeking at test results before the test ends?

Checking results too early inflates false positive rates and can lead you to pick a winner that is not actually better. Commit to your sample size before reviewing any results.